The vulnerability prioritization landscape has evolved dramatically since CVSS was introduced in 2005. What was once a single-score world has become a multi-framework ecosystem where different scoring systems answer fundamentally different questions. Using any single framework in isolation leads to suboptimal prioritization. Understanding how they complement each other is essential for building a program that focuses effort on the vulnerabilities that actually matter.

This article compares five frameworks: CVSS, EPSS, SSVC, KEV, and TRIS™. For each, we examine what it measures, what it misses, and when to use it.

CVSS: The Baseline That Everyone Knows

What It Measures

The Common Vulnerability Scoring System (CVSS) assigns a 0-10 score based on the intrinsic characteristics of a vulnerability. The base score evaluates attack vector (network, adjacent, local, physical), attack complexity, privileges required, user interaction, scope, and impact on confidentiality, integrity, and availability.

CVSS v4.0, released in 2023, added supplemental metrics and refined the scoring formula, but the fundamental approach remains the same: score the theoretical worst-case severity of a vulnerability in isolation.
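To make the "intrinsic characteristics" idea concrete, the following is a minimal sketch of the CVSS v3.1 base score arithmetic, using the metric weights and rounding rule from the published v3.1 specification. The function names (`base_score`, `roundup`, `severity`) are illustrative choices rather than part of any official library, and v4.0 uses a different scoring method that this sketch does not cover.

```python
import math

# Metric weights from the CVSS v3.1 specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
# Privileges Required is weighted differently when scope is changed.
PR = {
    "U": {"N": 0.85, "L": 0.62, "H": 0.27},
    "C": {"N": 0.85, "L": 0.68, "H": 0.5},
}


def roundup(value: float) -> float:
    """Round up to one decimal place using the integer method from v3.1."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector: str) -> float:
    """Compute the base score for a 'CVSS:3.1/AV:N/...' vector string."""
    # Skip the 'CVSS:3.1' prefix and split the rest into metric:value pairs.
    metrics = dict(part.split(":") for part in vector.split("/")[1:])
    scope = metrics["S"]
    iss = 1 - (
        (1 - CIA[metrics["C"]]) * (1 - CIA[metrics["I"]]) * (1 - CIA[metrics["A"]])
    )
    if scope == "U":
        impact = 6.42 * iss
    else:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    exploitability = (
        8.22 * AV[metrics["AV"]] * AC[metrics["AC"]]
        * PR[scope][metrics["PR"]] * UI[metrics["UI"]]
    )
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if scope == "C":
        total *= 1.08
    return roundup(min(total, 10.0))


def severity(score: float) -> str:
    """Map a score to the qualitative rating scale."""
    if score == 0:
        return "None"
    if score < 4.0:
        return "Low"
    if score < 7.0:
        return "Medium"
    if score < 9.0:
        return "High"
    return "Critical"


vector = "CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"
print(base_score(vector), severity(base_score(vector)))
```

Note that nothing in the inputs refers to your environment or to attacker behavior, which is exactly the limitation discussed next.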

What It Misses

- Real-world exploitation: CVSS does not consider whether anyone is actually exploiting the vulnerability. A CVE with CVSS 10.0 and zero real-world exploitation is scored identically to one with CVSS 10.0 that ransomware groups are using daily.

- Environmental context: CVSS base scores do not account for your specific environment. A vulnerability in a library you do not use, on a system that is not exposed, still gets the same score.

- Temporal dynamics: CVSS includes optional temporal metrics (renamed Threat metrics in v4.0), but they are rarely populated by vendors. In practice, the base score rarely changes after the CVE is published.

When to Use It

CVSS is the universal language of vulnerability severity. Use it as a starting input, never as the sole prioritization signal. It is appropriate for initial severity classification and for compliance frameworks that mandate CVSS-based SLAs (FedRAMP, PCI-DSS). But if CVSS is your only prioritization mechanism, you are over-prioritizing theoretical severity and under-prioritizing actual risk.

EPSS: Exploitation Probability

What It Measures

The Exploit Prediction Scoring System (EPSS), maintained by FIRST, assigns each CVE a probability between 0 and 1 representing the likelihood that it will be exploited in the wild within the next 30 days. EPSS is a machine learning model trained on real-world exploit telemetry: honeypot data, dark web feeds, proof-of-concept availability, social media chatter, and historical exploitation patterns.

EPSS scores are updated daily, meaning they reflect the current threat landscape rather than a static assessment from the day the CVE was published.
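Because scores change daily, most programs pull them programmatically. The sketch below parses a payload shaped like the JSON returned by FIRST's public EPSS API, where scores arrive as strings under a `data` array. The payload shape is an assumption to verify against the current API documentation, and the CVE IDs and values are placeholders, not real scores.

```python
import json

# Illustrative payload only: placeholder CVE IDs and made-up values.
# The field names (data, cve, epss, percentile, date) are assumed to match
# the FIRST EPSS API response format.
SAMPLE_RESPONSE = """
{
  "status": "OK",
  "total": 2,
  "data": [
    {"cve": "CVE-0000-0001", "epss": "0.97000", "percentile": "0.99900", "date": "2024-01-01"},
    {"cve": "CVE-0000-0002", "epss": "0.00200", "percentile": "0.40000", "date": "2024-01-01"}
  ]
}
"""


def parse_epss(payload: str) -> dict[str, float]:
    """Return a mapping of CVE ID to EPSS probability (0.0 to 1.0)."""
    body = json.loads(payload)
    # Scores are serialized as strings, so convert them to floats.
    return {row["cve"]: float(row["epss"]) for row in body["data"]}


scores = parse_epss(SAMPLE_RESPONSE)
for cve, probability in scores.items():
    print(f"{cve}: {probability:.1%} chance of exploitation in the next 30 days")
```

Storing the `date` field alongside each score would make it easier to detect stale values between daily refreshes.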

The Data Is Compelling

- Only ~4% of published CVEs are ever exploited in the wild. EPSS is designed to estimate which ones.

- The top 10% of CVEs by EPSS score account for over 85% of observed exploitations.

- Used as a binary high/critical threshold, CVSS alone correctly identifies exploited vulnerabilities only 53% of the time. EPSS achieves an 89% true positive rate at the same coverage level.
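Comparisons like these rest on two measurements: coverage (the share of exploited CVEs that a strategy flags) and efficiency (the share of flagged CVEs that turn out to be exploited). The sketch below computes both for a CVSS threshold and an EPSS threshold. The six records are toy data invented for illustration and do not reproduce the percentages above; the `Cve` class and function name are likewise hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Cve:
    cvss: float
    epss: float
    exploited: bool  # ground truth, known only in hindsight


def coverage_and_efficiency(
    cves: Iterable[Cve], flagged: Callable[[Cve], bool]
) -> tuple[float, float]:
    """Return (coverage, efficiency) for a flagging strategy."""
    cves = list(cves)
    tp = sum(1 for c in cves if flagged(c) and c.exploited)
    fn = sum(1 for c in cves if not flagged(c) and c.exploited)
    fp = sum(1 for c in cves if flagged(c) and not c.exploited)
    coverage = tp / (tp + fn) if tp + fn else 0.0
    efficiency = tp / (tp + fp) if tp + fp else 0.0
    return coverage, efficiency


# Toy data, invented for illustration only.
TOY = [
    Cve(cvss=9.8, epss=0.90, exploited=True),
    Cve(cvss=9.1, epss=0.002, exploited=False),
    Cve(cvss=7.5, epss=0.01, exploited=False),
    Cve(cvss=6.5, epss=0.40, exploited=True),
    Cve(cvss=5.3, epss=0.001, exploited=False),
    Cve(cvss=8.8, epss=0.03, exploited=False),
]

cvss_strategy = coverage_and_efficiency(TOY, lambda c: c.cvss >= 7.0)
epss_strategy = coverage_and_efficiency(TOY, lambda c: c.epss >= 0.1)
print("CVSS >= 7.0:", cvss_strategy)
print("EPSS >= 0.1:", epss_strategy)
```

In this toy set the CVSS threshold flags four CVEs and misses the exploited medium-severity one, while the EPSS threshold flags only the two that were exploited. Running the same measurement on your own historical data is a reasonable way to choose thresholds.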

What It Misses

- Your specific environment: EPSS is a population-level model. It predicts general exploitation probability, not whether your organization specifically will be targeted.

- Targeted attacks: EPSS is trained on mass-exploitation data. A zero-day used exclusively by an APT group targeting your industry may have a low EPSS score because it is not broadly exploited.

- Impact severity: EPSS tells you how likely exploitation is, not how bad the consequences would be. A highly likely exploit of a low-impact information disclosure is different from a highly likely exploit of a remote code execution.

When to Use It

EPSS should be a core input to every prioritization decision. It is the best available signal for "is someone actually going to exploit this?" Use it to separate the roughly 96% of CVEs that are unlikely ever to be exploited from the roughly 4% that are. Pair it with CVSS (for impact) and environmental context (for relevance) to build a complete picture.

SSVC: Decision-Focused Prioritization

What It Measures

The Stakeholder-Specific Vulnerability Categorization (SSVC) framework, developed by CERT/CC and CISA, takes a fundamentally different approach from numeric scoring. Instead of assigning a number, SSVC produces a decision outcome: Defer, Scheduled, Out-of-Cycle, or Immediate.

SSVC evaluates vulnerabilities through a decision tree with four inputs:

- Exploitation status: None, PoC available, or active exploitation

- Technical impact: Partial or total

- Automatable: Can the exploit be automated at scale?

- Mission prevalence: How important is the affected system to your mission?

The decision tree evaluates these inputs and produces an action recommendation rather than a numeric score. This design reflects the reality that vulnerability response is a series of discrete decisions (patch now, soon, on schedule, or later?), not a continuous spectrum.
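A decision tree of this kind can be sketched as a small function. The branching below is a deliberately simplified, hypothetical mapping written for illustration; it is not the official CERT/CC or CISA tree, and the three mission prevalence levels (minimal, support, essential) are an assumption. Real deployments should use the published trees.

```python
from enum import Enum


class Exploitation(Enum):
    NONE = "none"
    POC = "poc"
    ACTIVE = "active"


class TechnicalImpact(Enum):
    PARTIAL = "partial"
    TOTAL = "total"


class MissionPrevalence(Enum):
    MINIMAL = "minimal"
    SUPPORT = "support"
    ESSENTIAL = "essential"


def ssvc_decision(
    exploitation: Exploitation,
    technical_impact: TechnicalImpact,
    automatable: bool,
    mission: MissionPrevalence,
) -> str:
    """Return Defer, Scheduled, Out-of-Cycle, or Immediate (simplified tree)."""
    total = technical_impact is TechnicalImpact.TOTAL
    if exploitation is Exploitation.ACTIVE:
        # Active exploitation always warrants at least out-of-cycle work.
        if mission is MissionPrevalence.ESSENTIAL or (automatable and total):
            return "Immediate"
        return "Out-of-Cycle"
    if exploitation is Exploitation.POC:
        if automatable and total and mission is not MissionPrevalence.MINIMAL:
            return "Out-of-Cycle"
        return "Scheduled"
    # No known exploitation: schedule only when impact and relevance are high.
    if total and mission is not MissionPrevalence.MINIMAL:
        return "Scheduled"
    return "Defer"


print(ssvc_decision(
    Exploitation.ACTIVE, TechnicalImpact.TOTAL, True, MissionPrevalence.ESSENTIAL
))
```

Because every path through the function is explicit, the branch taken doubles as the written rationale for the decision.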

What It Misses

- Granularity within categories: All "Immediate" findings are treated equally. In a queue of 50 "Immediate" items, SSVC does not tell you which to start with.

- Automated enrichment: SSVC requires human judgment for several inputs (mission prevalence, automatable). This makes fully automated SSVC scoring difficult at scale.

- Temporal updates: SSVC decisions should be re-evaluated as exploitation status changes, but the framework does not prescribe a re-evaluation cadence.

When to Use It

SSVC is excellent for organizations that want a decision framework rather than a scoring system. It is particularly useful for government agencies (CISA recommends it) and for security teams that need to justify prioritization decisions to auditors. Use SSVC as the decision framework and feed EPSS data into the "exploitation status" input for the most accurate results.

CISA KEV: The Confirmed Exploitation Signal

What It Measures

The CISA Known Exploited Vulnerabilities (KEV) catalog is a curated list of CVEs that CISA has confirmed are being actively exploited in the wild. It is not a scoring system. It is a binary signal: a CVE is either in the KEV catalog or it is not.

KEV entries include a remediation due date (typically 14-21 days from addition) that is mandatory for federal civilian agencies under BOD 22-01 and serves as a best-practice SLA target for all organizations.
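Since KEV is a binary signal with a due date attached, checking a finding against it amounts to a dictionary lookup plus date arithmetic. The sketch below assumes a catalog already loaded from CISA's JSON feed, with entries under `vulnerabilities` and the fields `cveID`, `dateAdded`, and `dueDate`; verify those names against the current feed schema. The sample entry is a placeholder, not a real catalog record.

```python
from datetime import date
from typing import Optional

# Placeholder catalog fragment; field names are assumed to match CISA's feed.
SAMPLE_CATALOG = {
    "vulnerabilities": [
        {"cveID": "CVE-0000-0001", "dateAdded": "2024-01-01", "dueDate": "2024-01-22"},
    ]
}


def kev_index(catalog: dict) -> dict[str, dict]:
    """Index catalog entries by CVE ID for constant-time lookups."""
    return {entry["cveID"]: entry for entry in catalog["vulnerabilities"]}


def days_until_due(entry: dict, today: date) -> int:
    """Days remaining before the remediation due date (negative if overdue)."""
    return (date.fromisoformat(entry["dueDate"]) - today).days


def kev_status(index: dict[str, dict], cve_id: str, today: date) -> Optional[int]:
    """Return days until due if the CVE is listed, or None if it is not."""
    entry = index.get(cve_id)
    return days_until_due(entry, today) if entry else None


index = kev_index(SAMPLE_CATALOG)
print(kev_status(index, "CVE-0000-0001", date(2024, 1, 15)))
print(kev_status(index, "CVE-0000-0002", date(2024, 1, 15)))
```

Returning `None` for unlisted CVEs keeps "not in KEV" distinct from "due today", which would otherwise both read as zero.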

What Makes KEV Unique

- Confirmed exploitation: Unlike EPSS (which predicts exploitation probability), KEV confirms that exploitation has actually been observed in the wild. That removes the uncertainty about whether it will occur.

- Curation quality: CISA applies a high bar for KEV inclusion. Each entry requires confirmed exploitation evidence, an assigned CVE, and actionable remediation. This keeps the catalog focused and trustworthy.

- Actionability: Every KEV entry has a known remediation action (patch, workaround, or mitigation). There are no "we know it is exploited but there is nothing you can do" entries.

What It Misses

- Coverage: KEV contains approximately 1,200 entries out of 200,000+ CVEs. Many exploited vulnerabilities, particularly those used by sophisticated APT groups, are never added to KEV because CISA cannot publicly confirm exploitation without compromising intelligence sources.

- Speed: There is often a lag between initial exploitation and KEV addition. By the time a CVE appears in KEV, exploitation may have been occurring for weeks or months.

- Severity differentiation: All KEV entries are equally "confirmed exploited." The catalog does not differentiate between a CVE exploited by script kiddies and one used in targeted nation-state campaigns.

When to Use It

KEV should be a hard override in any prioritization framework. If a CVE is in the KEV catalog, it goes to the front of the remediation queue regardless of its CVSS score, EPSS probability, or SSVC decision. KEV status should trigger immediate SLAs with escalation paths to senior leadership.

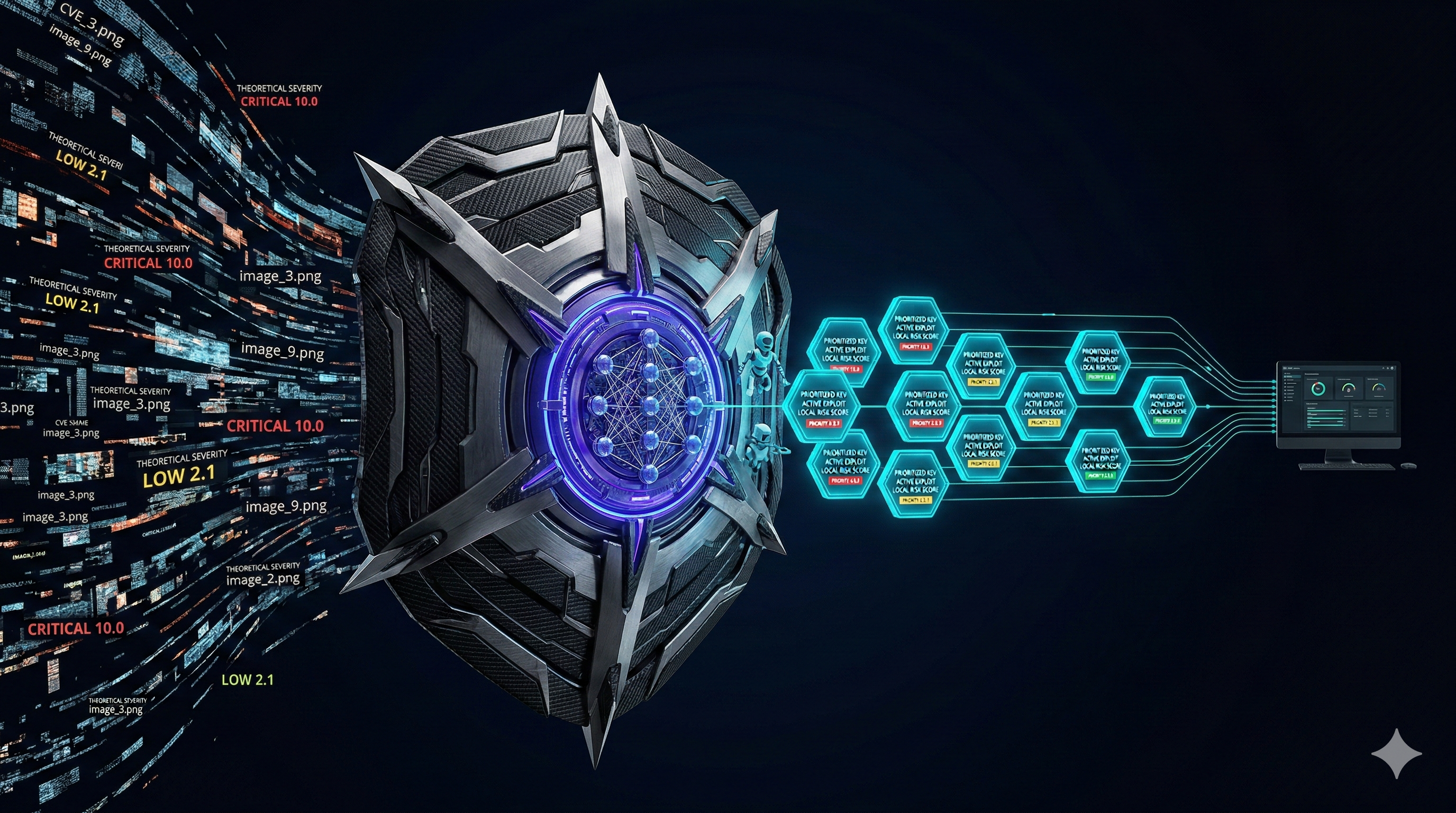

TRIS™: The Composite Intelligence Score

What It Measures

The TrueRisk Intelligence Score (TRIS™), developed by CVEasy AI, is a composite scoring framework that fuses multiple intelligence sources into a single 0-100 score. It was designed to answer the question that no single framework answers alone: "What is the actual risk of this vulnerability to my specific organization, right now?"

TRIS v2 incorporates twelve layers of intelligence (Patent Pending):

- Base severity (CVSS): The theoretical maximum impact, normalized to a 0-100 scale

- Exploit probability (EPSS): The likelihood of real-world exploitation within 30 days

- Active exploitation (KEV): Confirmed exploitation status from the CISA KEV catalog, applied as a hard multiplier

- Threat actor targeting: 49+ tracked APT groups, their toolkits, and their target sectors

- Asset criticality: Auto-classified business importance from crown-jewel to test environments

- Public exposure topology: Internet-facing vs internal vs air-gapped, with port and service context

- BASzy exploit validation: Does the attack actually work in your specific environment?

- Attack path blast radius (new in v2): Graph-based lateral movement scoring; how many assets can this vulnerability reach?

- Supply chain dependency propagation (new in v2): SBOM-aware transitive risk across your dependency tree

- Defense efficacy coefficient (new in v2): MITRE ATT&CK technique coverage of the exploitation chain by your actual controls

- Predictive threat trajectory (new in v2): Forward-looking momentum; is this vulnerability accelerating toward weaponization right now?

- Financial impact quantification (new in v2): FAIR-based dollar-value loss including primary, secondary, and productivity impact

TRIS v2 produces five action bands:

- ACT (90-100): Remediate within 24 hours. Active exploitation confirmed or imminent. Critical asset exposure.

- ATTEND (75-89): Remediate within 72 hours. High exploitation probability or significant compliance impact.

- TRACK (50-74): Remediate within 30 days. Moderate risk that should be monitored and scheduled for remediation.

- MONITOR (25-49): Remediate within 90 days. Low risk under current conditions. Review if threat landscape changes.

- INFORMATIONAL (0-24): Document and move on. Noise under the threshold of action.
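The band boundaries above translate directly into a lookup. The sketch below maps an already-computed score to its band and remediation window. It does not compute a TRIS score, and the function and constant names are illustrative rather than part of any product API.

```python
from datetime import timedelta
from typing import Optional

# (minimum score, band name, remediation window) from the bands listed above.
# INFORMATIONAL carries no remediation deadline.
BANDS = [
    (90, "ACT", timedelta(hours=24)),
    (75, "ATTEND", timedelta(hours=72)),
    (50, "TRACK", timedelta(days=30)),
    (25, "MONITOR", timedelta(days=90)),
    (0, "INFORMATIONAL", None),
]


def tris_band(score: float) -> tuple[str, Optional[timedelta]]:
    """Return (band name, remediation window) for a 0-100 score."""
    if not 0 <= score <= 100:
        raise ValueError(f"score must be between 0 and 100, got {score}")
    for minimum, name, window in BANDS:
        if score >= minimum:
            return name, window
    raise AssertionError("unreachable: the last band starts at 0")


print(tris_band(96))
print(tris_band(28))
```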

| Capability | CVSS | EPSS | SSVC | KEV | TRIS™ |
|---|---|---|---|---|---|
| Severity assessment | Yes | No | Partial | No | Yes |
| Exploitation probability | No | Yes | Partial | Binary | Yes |
| Environmental context | Optional | No | Yes | No | Yes |
| Dynamic updates | No | Daily | Manual | Yes | Real-time |
| Actionable output | Score | Probability | Decision | Yes/No | Score + SLA |
| Fully automatable | Yes | Yes | Partial | Yes | Yes |

Building a Composite Prioritization Strategy

No single framework provides complete prioritization. The most effective programs layer multiple frameworks to compensate for individual weaknesses:

- Start with CVSS as the baseline severity input. It provides the "how bad could this be" dimension.

- Layer EPSS for exploitation probability. This separates the theoretical from the likely. Deprioritize CVEs with EPSS below 0.1 (10%) and focus immediate attention and resources on the higher-probability findings.

- Apply KEV as a hard override. Any CVE in the KEV catalog jumps to the top of the queue regardless of its CVSS or EPSS score.

- Add environmental context through asset criticality, industry-specific threat data, and compliance requirements. This is what makes the prioritization specific to your organization.

- Use SSVC for decision documentation when you need to justify prioritization decisions to auditors or leadership. The decision tree format provides clear rationale.
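The first four layers can be sketched as a sort key: KEV membership dominates, the 0.1 EPSS threshold comes next, and a severity-times-likelihood-times-criticality product breaks ties. The `Finding` fields, the `asset_weight` scale, and the product formula are assumptions made for illustration; they are not a prescribed scoring method, and the CVE IDs are placeholders.

```python
from dataclasses import dataclass

EPSS_THRESHOLD = 0.1  # the 10% cut-off suggested above


@dataclass(frozen=True)
class Finding:
    cve: str
    cvss: float          # 0-10 base score
    epss: float          # 0-1 exploitation probability
    in_kev: bool         # listed in the CISA KEV catalog
    asset_weight: float  # hypothetical 0-1 criticality of the affected asset


def priority_key(finding: Finding) -> tuple[bool, bool, float]:
    """Sort key: KEV override first, then EPSS threshold, then a risk product."""
    likely = finding.epss >= EPSS_THRESHOLD
    risk = finding.cvss * finding.epss * finding.asset_weight
    return (finding.in_kev, likely, risk)


def rank(findings: list[Finding]) -> list[Finding]:
    """Return findings ordered from most to least urgent."""
    return sorted(findings, key=priority_key, reverse=True)


# Placeholder findings for illustration.
queue = rank([
    Finding("CVE-0000-0001", cvss=9.1, epss=0.002, in_kev=False, asset_weight=0.3),
    Finding("CVE-0000-0002", cvss=6.5, epss=0.05, in_kev=True, asset_weight=0.5),
    Finding("CVE-0000-0003", cvss=7.5, epss=0.60, in_kev=False, asset_weight=1.0),
])
for finding in queue:
    print(finding.cve)
```

In this ordering the KEV-listed medium-severity finding comes first, the likely-to-be-exploited high follows, and the unexploited Critical lands last, which is the inversion a CVSS-only sort would miss.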

Real-World Example: The Same CVE, Five Frameworks

Consider a hypothetical CVE affecting a widely used web framework. It allows remote code execution via a crafted HTTP request. A proof-of-concept has been published on GitHub, and it has been added to the CISA KEV catalog after ransomware groups began exploiting it.

- CVSS: 9.8 Critical. Network-accessible, no privileges required, no user interaction, high impact across CIA. This score is identical whether you run the framework or not.

- EPSS: 0.97 (97%). The model predicts near-certain exploitation based on the public PoC, KEV status, and observed telemetry. This differentiates it from the thousands of other 9.8 CVEs that are never exploited.

- SSVC: Immediate. Active exploitation is confirmed. Technical impact is total. The exploit is automatable. If the affected system supports a mission-critical function, the decision is unambiguous.

- KEV: Listed. This is a binary "drop everything" signal. CISA has confirmed active exploitation and assigned a remediation deadline.

- TRIS™: 96 (ACT band). The combination of high CVSS, extreme EPSS, KEV status, and a public exploit against a critical-tier asset places it in the highest urgency band. 24-hour SLA with automatic escalation to the CISO.

Now consider a different scenario: a CVE in a PDF parsing library. CVSS rates it 9.1 Critical (arbitrary code execution when processing a malicious PDF). But EPSS is 0.002 (0.2%), it is not in KEV, no PoC has been published, and the affected library is only used in a development tool, not in production.

- CVSS: 9.1 Critical. The same Critical rating as the actively exploited web framework CVE above.

- EPSS: 0.002. Virtually zero probability of exploitation in the next 30 days.

- SSVC: Scheduled. No exploitation, partial mission relevance, not automatable without a PoC.

- KEV: Not listed.

- TRIS™: 28 (MONITOR band). Low EPSS, no KEV, non-production asset. Remediate within 90 days.

The gap between these two scenarios is invisible to CVSS-only prioritization. Both are "Critical." One is an emergency. The other can wait three months. The composite frameworks make this distinction clear.

The Bottom Line

The vulnerability prioritization landscape has matured significantly beyond CVSS. Each framework addresses a specific dimension of risk: CVSS measures severity, EPSS predicts exploitation, SSVC structures decisions, KEV confirms active threats, and TRIS™ fuses them all into an organization-specific composite score.

The organizations that still prioritize by CVSS alone are spending most of their remediation effort on vulnerabilities that will never be exploited while ignoring medium-severity CVEs that ransomware groups are actively using. The organizations that layer these frameworks achieve dramatically better outcomes with the same remediation capacity.

The evolution is clear: from single-score severity to multi-signal intelligence. The only question is how quickly your organization makes the transition.